Anthropic shipped Claude Opus 4.7 this week, and the headline number everyone is quoting is the ~13% lift on coding benchmarks over Opus 4.5. I’ve been putting it through real work — not benchmarks — for the last several days, and the honest answer is more interesting than the number suggests.

A 13% benchmark jump doesn’t translate to code that’s 13% better. It translates to fewer of the moments that used to kill my momentum.

Here’s what I’ve actually noticed.

The Errors I Used to Fix by Hand Are Mostly Gone

The class of mistake that Opus 4.7 has clearly shrunk is the small, boring one. Wrong import path. Slightly out-of-date API signature. A useState where I wanted a useReducer. A type definition that works but isn’t the idiomatic one for the library version I’m on.

These are the things I used to catch in review and patch in 30 seconds. Individually they’re nothing. Cumulatively they’re the reason a “10-minute” AI-assisted task became a 25-minute one.

Opus 4.7 still makes mistakes. But the mistakes it makes now are usually interesting — a design choice I’d push back on, not a piece of code I’d throw away.

Tool Use Finally Feels Production-Grade

The improvement that matters more to me than raw coding accuracy is what’s happened to tool-calling reliability.

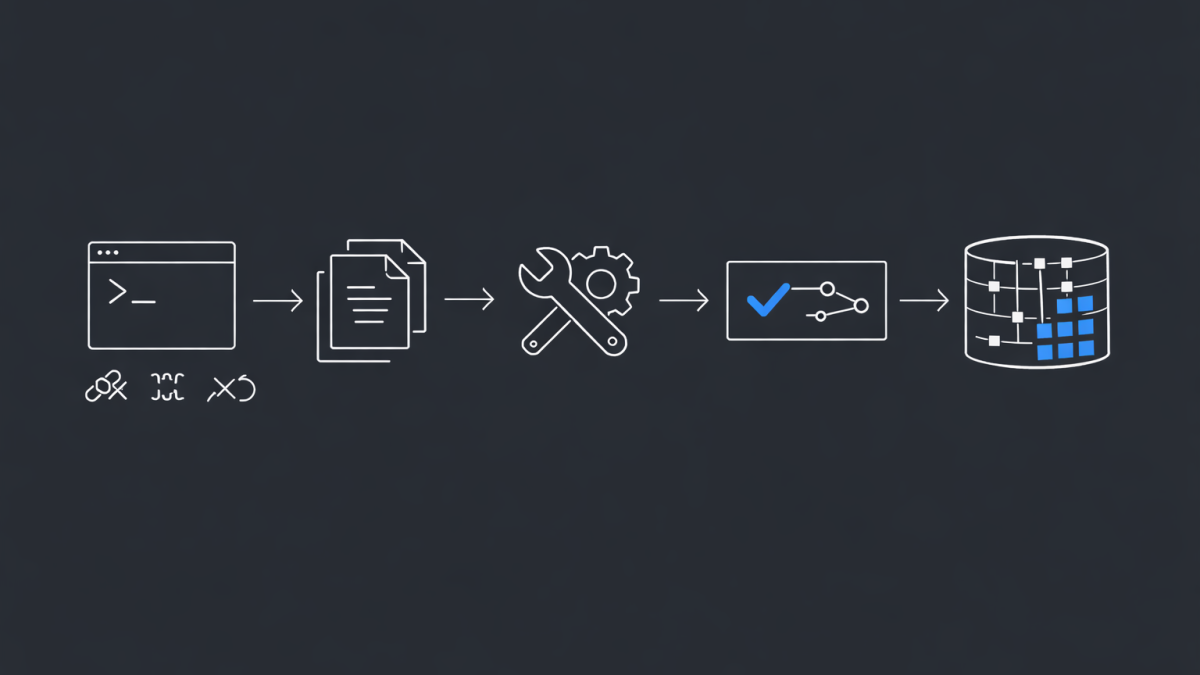

I run a lot of agentic workflows — the model plans, calls a shell, reads a file, edits it, runs tests, loops. With earlier Opus versions, I could feel the seams. The model would forget it had already read a file. It would call the same tool twice with identical arguments. It would declare a task done while one of its own test runs was still failing.

With 4.7, those failure modes show up far less often in my runs. The model holds state across a long tool-use chain much more reliably. It’s more willing to stop and say “I don’t have enough information” instead of improvising. For anyone building real coding agents, that’s the unlock.

Long Context Is Where It Quietly Dominates

Where I see the biggest day-to-day gain is in long-context work.

Feeding the model an entire repository’s worth of files — 200k, 300k tokens of actual code — and asking it to trace a bug across boundaries used to produce confident-sounding nonsense about half the time. Now it either finds the answer or tells me it can’t.

That second behaviour matters more than the first. A model that knows the edge of its own knowledge is a model I can trust with a real codebase.

The Honest Limitations

It’s not magic. Three things Opus 4.7 still gets wrong in my testing:

First, it over-refactors. Ask it to fix a bug and it will often rewrite the surrounding function “for clarity”. I’ve learned to be explicit about scope.

Second, it still struggles with very recent libraries and APIs released after its training cutoff. For anything bleeding-edge, I still hand-feed it the current docs.

Third, in very long agentic runs (dozens of tool calls), it can get stuck trying variations of the same failed approach. Good agent scaffolding still matters. The model doesn’t replace the engineering around it.
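
To make "scaffolding" concrete, here is a minimal sketch of one possible guard, not a description of any particular agent framework. It assumes your loop can report each tool call's name and whether it succeeded; the `StallGuard` class and the `run_tests` tool name are hypothetical. It halts the run once the same tool has failed several times in a row, whatever arguments were tried, which is roughly what "variations of the same failed approach" looks like from the outside.

```python
class StallGuard:
    """Hypothetical guard: stop an agent run when one tool keeps failing."""

    def __init__(self, max_consecutive_failures: int = 3):
        self.max_consecutive_failures = max_consecutive_failures
        self.last_failed_tool = None  # tool name of the current failure streak
        self.streak = 0               # consecutive failures of that tool

    def record(self, tool: str, ok: bool) -> bool:
        """Record one tool call; return True if the run should be halted."""
        if ok:
            # Any success breaks the streak.
            self.last_failed_tool = None
            self.streak = 0
            return False
        if tool == self.last_failed_tool:
            self.streak += 1
        else:
            # A different tool failed: start a new streak.
            self.last_failed_tool = tool
            self.streak = 1
        return self.streak >= self.max_consecutive_failures


guard = StallGuard(max_consecutive_failures=3)
for attempt in range(5):
    # Simulated loop in which the test run fails on every attempt.
    if guard.record("run_tests", ok=False):
        print(f"halting after {attempt + 1} failed attempts")
        break
```

When the guard trips, the sensible next step is usually to hand control back to a human or restart with a fresh plan rather than let the loop keep burning tokens.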

What 13% Actually Buys You

Benchmarks are a lagging indicator of something more important: how often the model breaks your flow.

The real measurement I care about is how many of my AI-assisted tasks finish on the first attempt, without me having to intervene, re-prompt, or clean up afterwards. By that measure, Opus 4.7 is the first model where the answer feels closer to “most” than to “some”.
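
If you want to track that number rather than go by feel, a rough sketch follows. It assumes you keep a simple log per task; the `TaskLog` fields and the sample entries are hypothetical illustrations, not measurements from my testing.

```python
from dataclasses import dataclass


@dataclass
class TaskLog:
    name: str
    attempts: int      # prompts needed before the result was accepted
    manual_fixes: int  # hand edits made after accepting the result


def first_attempt_rate(logs: list[TaskLog]) -> float:
    """Share of tasks that finished in one attempt with no cleanup."""
    if not logs:
        return 0.0
    clean = sum(1 for t in logs if t.attempts == 1 and t.manual_fixes == 0)
    return clean / len(logs)


# Made-up sample log, purely to show the calculation.
sample = [
    TaskLog("rename config key", attempts=1, manual_fixes=0),
    TaskLog("add retry to client", attempts=1, manual_fixes=0),
    TaskLog("fix flaky test", attempts=2, manual_fixes=0),
    TaskLog("migrate date helper", attempts=1, manual_fixes=1),
]
print(f"first-attempt rate: {first_attempt_rate(sample):.0%}")
```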

That’s the version of “13% better” that actually matters. Not smarter on paper. Less friction in practice.

And that’s the threshold where AI-assisted coding stops being a demo and starts being a tool you reach for without thinking about it.
