In this blog post, “DeepSeek, Moonshot, MiniMax and the Claude Distillation Trap”, we will walk through what “model distillation theft” actually is, why it’s so hard to prevent, and how Anthropic was able to confidently attribute large-scale extraction of Claude’s capabilities to specific labs.

I’ve spent 20+ years around enterprise platforms—Azure, Microsoft 365, identity, security, and now AI—and one pattern keeps repeating. As soon as something becomes valuable, it becomes measurable, optimisable, and eventually… harvested.

The title of this post, DeepSeek, Moonshot, MiniMax and the Claude Distillation Trap, isn’t about drama. It’s about a very practical reality: if your AI capability is accessible through an API, it can be copied—unless you treat abuse detection as a first-class engineering problem.

High-level first: what happened in plain language

Anthropic publicly alleged that DeepSeek, Moonshot, and MiniMax used a large number of fraudulent accounts to generate millions of interactions with Claude. The goal wasn’t normal usage. The goal was to mass-produce high-quality question/answer pairs and use those outputs to train their own models.

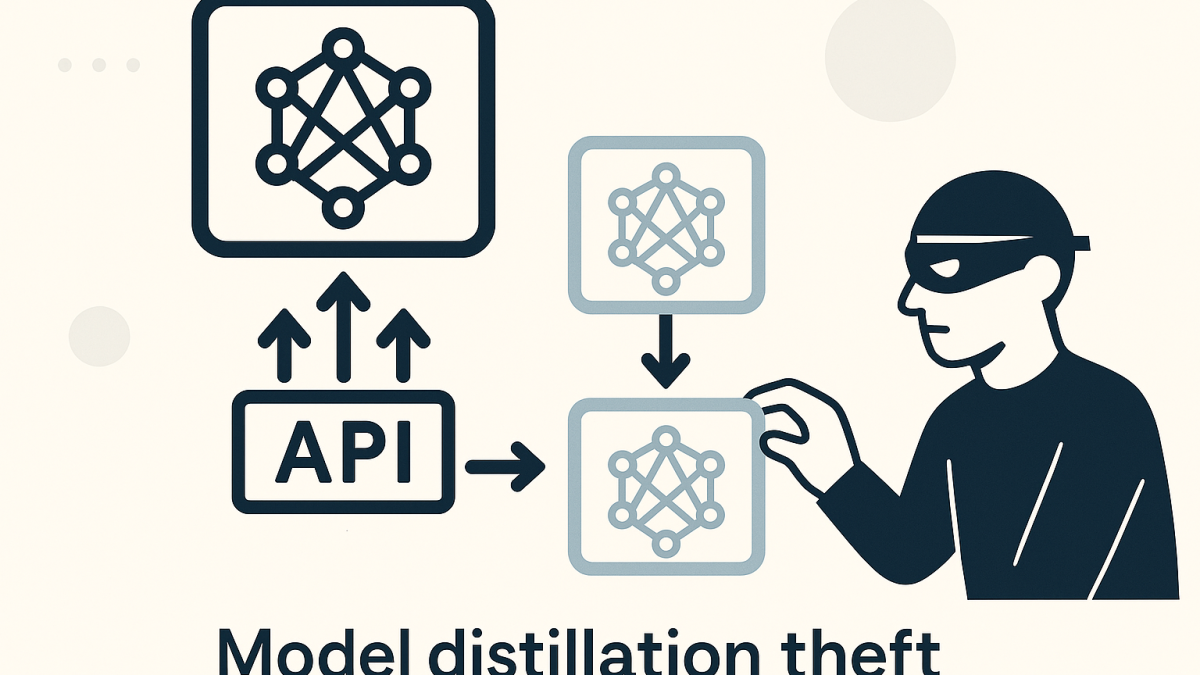

This technique is called distillation. In legitimate settings, distillation is a way to make a smaller, cheaper model learn from a stronger one. In illicit settings, it’s a shortcut: “copy the teacher’s answers” instead of doing the expensive work of training and alignment yourself.

The main technology behind it is distillation and model extraction

Distillation sits in the broader category of model extraction. The attacker doesn’t need your training data or your model weights. They just need enough input/output examples that the victim model’s behaviour can be approximated.

In practice, it looks like this:

- You send many carefully designed prompts to the target model.

- You collect the outputs (and sometimes scores, rankings, or structured rubrics).

- You train your “student” model to reproduce those outputs.

If you can afford enough queries, you can pick off the most valuable behaviours: coding style, tool-use patterns, agent planning, and even safety boundaries. And crucially, you can do it without the same investment in compute, research time, or reinforcement learning.

So how did Anthropic prove it?

From what’s been reported, Anthropic’s case wasn’t built on a single magic watermark. It was built the way good incident response is usually built: correlation, behavioural signals, and attribution through infrastructure.

When I read cases like this, I think of it less like “catching one hacker” and more like catching an entire fraud operation. You don’t prove it with one log line. You prove it with a pattern that’s extremely unlikely to occur by accident.

1) Volume and shape of traffic that doesn’t look human

Normal users are spiky and messy. They ask varied questions, they iterate, they abandon threads, they come back days later, and they don’t hammer one narrow capability for weeks.

Distillation traffic is different. It’s repetitive, structured, and high-throughput. It often looks like batch jobs rather than curiosity.

Even before attribution, that alone is a strong indicator you’re looking at extraction rather than usage.
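One way to make “batch job versus curiosity” measurable is to look at how regular the gaps between requests are. Here’s a minimal sketch, assuming you already have per-account request timestamps in seconds. The function name, the sample data, and the choice of the coefficient of variation as the score are my own illustration, not something Anthropic has described.

```python
from statistics import mean, pstdev

def interarrival_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive requests.

    Values near 0 mean metronome-like traffic (typical of schedulers).
    Human sessions tend to score well above 1 because of long idle gaps.
    Returns None when there are fewer than three requests.
    """
    ts = sorted(timestamps)
    if len(ts) < 3:
        return None
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    avg = mean(gaps)
    if avg == 0:
        return 0.0
    return pstdev(gaps) / avg

# Hypothetical data: a scripted client firing every 2 seconds, and a
# person who sends a few messages, leaves, and comes back much later.
batch = [i * 2.0 for i in range(50)]
human = [0, 5, 9, 300, 310, 4000, 4003, 90000]

print(interarrival_cv(batch))   # perfectly regular gaps score 0.0
print(interarrival_cv(human))   # irregular gaps score well above 1
```

Treat this as one weak signal only. An attacker can add timing jitter, so a score like this is useful mainly in combination with content-based checks.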

2) Multi-account coordination and “hydra” behaviour

One of the classic ways to defeat rate limits is to spread traffic across many accounts. If a single account is capped at N requests per minute, you create 1,000 accounts and orchestrate them.

This is where a lot of API security programs fail in the real world. They assume “account = user”. Attackers assume “account = disposable token”.

When you see thousands of accounts behaving like one coordinated system—same prompt templates, same timing patterns, same target capabilities—you can treat that like a single actor even if identities differ.
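To show what “same prompt templates across different identities” can look like in practice, here’s a rough sketch that masks the variable parts of each prompt and groups accounts by the scaffold that remains. The masking rules, function names, and threshold are all assumptions for illustration. A real system would need fuzzier matching than an exact hash.

```python
import hashlib
import re
from collections import defaultdict

def scaffold_fingerprint(prompt):
    """Hash a prompt after masking the parts that vary between calls."""
    text = prompt.lower()
    text = re.sub(r"\d+", "<num>", text)        # mask numbers
    text = re.sub(r'"[^"]*"', "<str>", text)    # mask quoted payloads
    text = re.sub(r"\s+", " ", text).strip()    # collapse whitespace
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:16]

def accounts_sharing_scaffolds(requests, min_accounts=3):
    """Map fingerprint -> set of accounts, keeping only widely shared ones.

    `requests` is an iterable of (account_id, prompt) pairs.
    """
    by_fingerprint = defaultdict(set)
    for account_id, prompt in requests:
        by_fingerprint[scaffold_fingerprint(prompt)].add(account_id)
    return {fp: accts for fp, accts in by_fingerprint.items()
            if len(accts) >= min_accounts}

# Hypothetical traffic: three "different" customers using one template.
requests = [
    ("acct-1", 'Plan the steps to solve task 17: "sort a list"'),
    ("acct-2", 'Plan the steps to solve task 42: "parse a date"'),
    ("acct-3", 'plan the steps to solve task 7:  "merge two files"'),
    ("acct-4", "What's a good name for a cat?"),
]
shared = accounts_sharing_scaffolds(requests)
```

In this toy data, `shared` holds a single fingerprint that maps to `acct-1`, `acct-2`, and `acct-3`, which is the “one actor, many identities” shape described above.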

3) Targeting the most differentiated capabilities

In the reports, the alleged prompts were not evenly distributed across “general chat”. They focused on higher-value areas like:

- Agentic reasoning and planning

- Tool use and orchestration patterns

- Coding and debugging workflows

- Attempts to elicit chain-of-thought style reasoning traces

That selection matters. If you were just load testing, you wouldn’t concentrate on the “moat behaviours”. If you were doing product evaluation, you wouldn’t need millions of near-duplicate trials. That narrow targeting strengthens the intent argument.
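Narrow targeting can be turned into a number. The sketch below assumes some upstream classifier has already labelled each request with a capability category (the category names here are hypothetical) and scores how evenly an account’s traffic is spread using normalised Shannon entropy.

```python
import math
from collections import Counter

def normalized_entropy(labels, n_categories):
    """Shannon entropy of the label distribution, scaled to [0, 1].

    1.0 means traffic is spread evenly across all categories.
    Values near 0 mean it is concentrated on very few of them.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0 or n_categories < 2:
        return 0.0
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return entropy / math.log(n_categories)

# Hypothetical category set produced by an upstream request classifier.
CATEGORIES = ["general_chat", "writing", "coding",
              "tool_use", "agent_planning", "translation"]

mixed = CATEGORIES * 10                     # evenly spread usage
narrow = (["agent_planning"] * 500          # hammering a few capabilities
          + ["tool_use"] * 480
          + ["coding"] * 20)

mixed_score = normalized_entropy(mixed, len(CATEGORIES))
narrow_score = normalized_entropy(narrow, len(CATEGORIES))
```

The evenly spread account scores close to 1.0, while the narrowly targeted one lands below 0.5. A low score is not proof of anything on its own, since plenty of legitimate customers only use coding features, but combined with volume it supports the intent argument.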

4) IP, metadata, and infrastructure indicators

Attribution in cloud systems is rarely “the IP address says DeepSeek”. It’s more like:

- IP clusters that map to known proxy or reseller networks

- Repeated TLS/client fingerprints and automation signatures

- Consistent request metadata patterns (headers, SDK usage, timing jitter)

- Cross-correlation with third-party infrastructure observations

This is the same style of work I’ve seen in enterprise threat hunting. You build confidence by layering weak signals until the combined picture is hard to dismiss.
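The arithmetic behind “layering weak signals” is worth seeing once. Here’s a small sketch that combines signals by multiplying likelihood ratios onto prior odds (done in log space). Every number in it is invented for illustration, and it assumes the signals are independent, which real infrastructure signals often are not, so correlated signals would overstate the result.

```python
import math

def posterior_probability(prior, likelihood_ratios):
    """Combine signals by adding log-likelihood ratios to the prior log-odds.

    Assumes the signals are conditionally independent (a strong assumption).
    """
    log_odds = math.log(prior / (1 - prior))
    log_odds += sum(math.log(lr) for lr in likelihood_ratios)
    return 1 / (1 + math.exp(-log_odds))

# Hypothetical likelihood ratios: how much more often each signal appears
# in extraction traffic than in legitimate traffic. None is decisive alone.
signals = {
    "proxy_or_reseller_ip_range": 4.0,
    "repeated_tls_fingerprint": 5.0,
    "uniform_header_and_sdk_pattern": 3.0,
    "matches_third_party_observation": 6.0,
}
prior = 0.01  # assumed base rate of abusive account clusters

single = posterior_probability(prior, [5.0])
combined = posterior_probability(prior, signals.values())

print(f"one signal:  {single:.3f}")
print(f"all signals: {combined:.3f}")
```

With these made-up inputs, a single signal moves the estimate from 1% to a little under 5%, while all four together push it above 75%. That is the sense in which no individual indicator “says DeepSeek” but the combined picture becomes hard to dismiss.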

5) “Pivot to the new model” as a tell

One detail that stood out in reporting was the idea that when a new Claude model became available, a large portion of the suspicious traffic shifted quickly to the new model.

That’s not how ordinary customers behave. Ordinary customers don’t instantly retool their workloads, prompt sets, and evaluation harnesses within a day. Extraction pipelines do, because the whole point is to capture the delta—what got better, what changed, what new behaviours appeared.
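A simple way to quantify that pivot is to ask what share of an account cluster’s traffic lands on the new model in the first day after release. This is a sketch under my own assumptions: the 24-hour window, the model names, and the event shape are all hypothetical.

```python
from datetime import datetime, timedelta

def share_on_new_model(events, new_model, release_time,
                       window=timedelta(hours=24)):
    """Fraction of requests sent to `new_model` in the window after release.

    `events` is an iterable of (timestamp, model_name) pairs for one account
    or one cluster of accounts. Returns None if the window had no traffic.
    """
    window_end = release_time + window
    in_window = [model for ts, model in events
                 if release_time <= ts < window_end]
    if not in_window:
        return None
    return sum(1 for model in in_window if model == new_model) / len(in_window)

release = datetime(2025, 1, 1, 12, 0)   # hypothetical release time

# A cluster that used the old model until release, then switched at once.
suspicious = [(release - timedelta(hours=h), "model-old") for h in range(1, 11)]
suspicious += [(release + timedelta(minutes=30), "model-old")] * 2
suspicious += [(release + timedelta(hours=h), "model-new") for h in range(1, 19)]

# A customer that keeps running its existing workload unchanged.
ordinary = [(release + timedelta(hours=h), "model-old") for h in range(1, 21)]

suspicious_share = share_on_new_model(suspicious, "model-new", release)
ordinary_share = share_on_new_model(ordinary, "model-new", release)
```

Here the suspicious cluster sends 18 of its 20 post-release requests to the new model (a share of 0.9), while the ordinary customer stays at 0.0. Early adopters exist too, so this works best as a ranking signal across clusters rather than a hard rule.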

A simple technical sketch: what distillation looks like

Below is a simplified example of how a distillation dataset might be generated. This is not a “how-to steal” guide—it’s intentionally incomplete—but it helps leaders understand why this is a security problem, not just a legal problem.

    # Pseudocode: build a dataset of (prompt, response) pairs
    # Then fine-tune a student model to imitate the teacher.

    prompts = load_prompt_templates(
        focus=["tool_use", "agent_planning", "coding"],
        variations=100000
    )

    dataset = []

    for p in prompts:
        # Teacher is the external frontier model (e.g., Claude)
        teacher_response = call_teacher_api(prompt=p)
        dataset.append({"prompt": p, "response": teacher_response})

    # Student is your internal model
    train_student_model(dataset)

If you can automate account creation, spread calls across a proxy network, and run this continuously, you can manufacture a very large imitation corpus surprisingly quickly.

What this means for executives and architects

Most organisations reading this aren’t building frontier models. But plenty are building AI-enabled products, internal copilots, and agent workflows that expose valuable business logic through prompts and responses.

In my experience, the risk isn’t just “someone copies the model.” It’s that someone copies:

- Your process knowledge embedded in prompts

- Your tool orchestration patterns

- Your policy logic and safeguards

- Your proprietary data transformations (even if you never expose raw data)

And if you operate in Australia, you also have a governance overlay. Essential Eight thinking applies here more than people realise: application control, patching, admin privilege management, and logging aren’t “legacy security”. They’re prerequisites for safe AI operations. The same goes for Australian privacy expectations—AI systems leak in ways traditional systems don’t.

Practical defences that actually help

No silver bullets, but there are realistic controls I’ve seen work in practice.

1) Treat AI APIs as high-value abuse targets

Most rate limiting is too shallow. You need behavioural limits (template repetition, narrow capability targeting, concurrency across accounts), not just “requests per minute”.
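As one illustration of a behavioural limit, here’s a sketch of a sliding-window limiter that is keyed by a behavioural cluster rather than by account, so a thousand accounts acting as one system share one budget. It assumes some upstream job assigns each request a `cluster_id` (for example from shared prompt scaffolds or client fingerprints). That job, the class name, and the limits are all hypothetical.

```python
from collections import defaultdict, deque

class ClusterRateLimiter:
    """Sliding-window limiter keyed by behavioural cluster, not by account."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)   # cluster_id -> request timestamps

    def allow(self, cluster_id, now):
        """Return True and record the request if the cluster is under budget."""
        recent = self._recent[cluster_id]
        while recent and now - recent[0] >= self.window_seconds:
            recent.popleft()                # drop requests outside the window
        if len(recent) >= self.max_requests:
            return False
        recent.append(now)
        return True

# Three requests per 60 seconds for the whole cluster, whichever account
# each request arrives on.
limiter = ClusterRateLimiter(max_requests=3, window_seconds=60)
decisions = [limiter.allow("cluster-A", t) for t in (0, 1, 2, 3)]
print(decisions)   # [True, True, True, False]
```

The design choice that matters is the key, not the algorithm: the same limiter keyed by account ID would let every account in the swarm through.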

2) Instrument prompts and responses like security telemetry

Security teams can’t respond to what they can’t see. You don’t need to store everything forever, but you do need enough telemetry to answer: “Is this normal?” and “Did this suddenly change?”
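Here’s a rough idea of what “enough telemetry” might mean: a per-request record that supports duplicate detection and volume analysis without retaining raw text. The field names are my own assumptions, and `client_fingerprint` stands in for whatever TLS or SDK fingerprint your edge already computes.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class InferenceEvent:
    timestamp: float          # Unix seconds
    account_id: str
    model: str
    prompt_sha256: str        # exact-duplicate detection without the text
    prompt_chars: int
    response_chars: int
    client_fingerprint: str   # assumed to be computed upstream

def make_event(timestamp, account_id, model, prompt, response,
               client_fingerprint):
    """Build a telemetry record that stores a digest instead of the prompt."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return InferenceEvent(timestamp, account_id, model, digest,
                          len(prompt), len(response), client_fingerprint)

event = make_event(1_700_000_000.0, "acct-1", "model-a",
                   "Summarise this runbook", "Here is a summary...", "fp-123")
log_line = json.dumps(asdict(event), sort_keys=True)
```

One caveat on the privacy side: a plain hash of a short or guessable prompt can be brute-forced, so a keyed hash (HMAC) with a protected key is likely a better fit where prompts may contain personal information.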

3) Detect coordinated account swarms

Look for shared fingerprints across accounts: identical prompt scaffolds, identical tool schemas, identical evaluation rubrics, and identical timing patterns. A thousand “different” customers can still behave like one machine.

4) Put friction in account creation and high-volume access

Yes, friction hurts growth. But if you offer high-value model access, you need staged trust: progressive limits, stronger verification for volume, and tighter monitoring of “discount” programs that attackers love to abuse.
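Staged trust can start as something very simple. The sketch below derives a per-minute limit from how much an account has proven about itself. The tiers, multipliers, and field names are invented for illustration, so tune them against your own abuse data.

```python
from dataclasses import dataclass

@dataclass
class Account:
    age_days: int
    identity_verified: bool
    payment_verified: bool
    on_discount_program: bool = False

def requests_per_minute_limit(account):
    """Return a rate limit that grows as the account earns trust."""
    limit = 10                       # new, unverified accounts start low
    if account.age_days >= 30:
        limit *= 2                   # some history with the platform
    if account.payment_verified:
        limit *= 3
    if account.identity_verified:
        limit *= 5                   # stronger verification unlocks volume
    if account.on_discount_program:
        limit = min(limit, 60)       # cap programs that are cheap to abuse
    return limit

new_account = Account(age_days=0, identity_verified=False, payment_verified=False)
trusted = Account(age_days=90, identity_verified=True, payment_verified=True)
discounted = Account(age_days=90, identity_verified=True, payment_verified=True,
                     on_discount_program=True)

print(requests_per_minute_limit(new_account))   # 10
print(requests_per_minute_limit(trusted))       # 300
print(requests_per_minute_limit(discounted))    # 60
```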

5) Assume attempts to elicit reasoning traces

Attackers will try to get the model to show its working. Even if you don’t expose internal chain-of-thought, they’ll probe for consistent intermediate steps they can use as training labels.

From a product standpoint, your safest posture is: provide answers and structured outputs that are useful to customers, while being cautious about giving away stable, high-signal intermediate traces at scale.

An anonymised scenario I’ve seen play out

One organisation rolled out an internal assistant to speed up change management and operational support. It wasn’t a frontier model—just a well-designed orchestration layer over a strong LLM.

Within weeks, the assistant became the “easiest way” to extract institutional knowledge, because employees started pasting in standard operating procedures and asking the assistant to summarise and generate runbooks. The assistant wasn’t leaking data externally, but it was quietly becoming a single endpoint where an enormous amount of process IP flowed.

My takeaway wasn’t “ban it”. It was: if this endpoint is now a knowledge concentrator, it deserves the same protection as a finance system. Logs, identity controls, and anomaly detection aren’t optional.

The forward-looking insight

I think we’re heading toward a world where “model security” looks a lot like “fraud prevention” and “bot mitigation” combined. It’s not just about keeping attackers out. It’s about continuously proving that usage patterns are human, legitimate, and aligned to the purpose you intended.

If distillation-at-scale is now part of the competitive landscape, the uncomfortable question for every AI platform team is this: are we building AI capabilities… or are we building an AI capability plus the security program required to keep it ours?