In this blog post How I’d Architect a Safe OpenClaw Evaluation Environment we will walk through a practical way to evaluate OpenClaw safely, without slowing innovation to a crawl.

One pattern I keep running into is this: the moment a tool can act (not just answer), leaders get nervous—and they should. OpenClaw is in that “agent” category where the upside is real, but so is the blast radius if you run it like a normal dev tool.

In my experience, the easiest way to kill innovation isn’t security. It’s unclear boundaries. Teams don’t know what’s allowed, so they either stop experimenting or they experiment in the worst possible place: production-like environments with real data.

What OpenClaw actually is in plain language

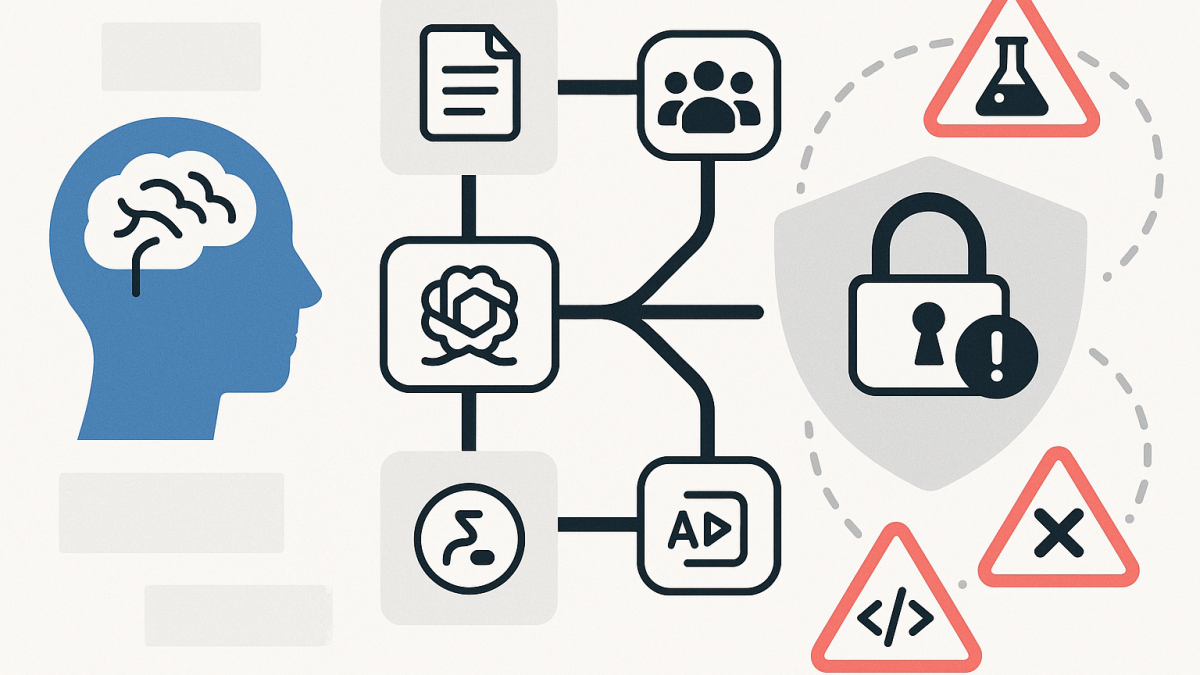

High level, OpenClaw is an open-source AI agent platform. Instead of a single chat window, it connects to channels (like chat apps or collaboration tools) and can call “skills” to do work—read files, hit APIs, automate workflows, and sometimes interact with systems on your behalf.

The key technology shift is this: an LLM (like GPT or Claude) isn’t just generating text. It’s acting as a planner that chooses actions, and a controller that invokes tools. That tool layer is where value happens—and where risk lives.

Why evaluation is uniquely risky for agent tools

When you “evaluate” an agent, you typically give it exactly what it needs to be useful: credentials, integrations, and access to internal context. That’s also exactly what an attacker (or a mistake) would love to get.

And agents have a different failure mode than classic software. A standard app fails in predictable ways. An agent can be pushed off-track by a dodgy instruction, a poisoned document, or an unexpected tool output—and still look confident while doing the wrong thing.

The design goal: fast learning with a small blast radius

My north star is simple: make it easy to experiment, and hard to do damage. That means we optimise for short feedback loops, while architecting containment from day one.

I also assume two things upfront:

- Prompt injection will happen (accidental or intentional).

- Secrets will leak somewhere unless we design for it (logs, config files, shell history, screenshots, and so on).

My reference architecture for a safe OpenClaw evaluation

I typically break this into five layers. Each layer is there to protect you from a different class of mistake.

1) Identity and access: treat the agent like a junior admin with amnesia

For evaluation, I create a dedicated identity boundary. Not a shared “ai-test” account. A real, auditable, least-privileged identity per environment, and ideally per evaluator or team.

Practically, that means:

- Separate tenant / subscription where possible (or at least a separate resource group plus policy guardrails).

- Short-lived credentials (time-bound tokens) rather than long-lived API keys.

- Just enough permissions for the scenario, not “Contributor because it’s easier”.

- No directory-wide read to users/groups unless the use case demands it.

If you’re in Microsoft land, I’m a big fan of making evaluation identities “boring”: no standing admin, no broad Graph permissions, no mailbox access by default. Agents don’t need your whole org chart to prove value.

2) Network containment: default deny, then open what’s necessary

When agents can browse, fetch dependencies, call APIs, and interact with endpoints, network design becomes your seatbelt.

My approach is:

- Run the evaluation in an isolated network segment (separate VNet/VPC).

- Egress control: only allow outbound to approved domains/services.

- No inbound exposure unless there is a clear reason.

- DNS filtering to reduce the chance of calling random infrastructure.

In Australia, I map this back to the ACSC Essential Eight mindset: reduce the attack surface, restrict admin privileges, and harden user applications. Agents are “user applications” with unusually high leverage.

3) Data safety: synthetic by default, anonymised when you must

Most “AI evaluation” failures I’ve seen weren’t exotic hacks. They were basic data hygiene problems: someone connected the agent to a real SharePoint site, a real mailbox, or a real ticketing system “just for a test”.

For OpenClaw evaluation, I use a simple rule:

- Phase 1: synthetic data only (fake invoices, fake contracts, fake customer tickets).

- Phase 2: anonymised samples where business realism matters.

- Phase 3: limited real data with explicit approvals, monitoring, and a rollback plan.

If privacy is in scope (and in Australia, it usually is), I also document where data could transit: model provider calls, telemetry, logs, and third-party skills. It’s much easier to do this at evaluation time than after you’ve built dependency chains.

4) Skill sandboxing: permissions per skill, not per agent

OpenClaw’s “skills” model is powerful because it breaks work into discrete capabilities. That’s also your best lever for safety.

I design skill execution like this:

- Each skill has a permissions manifest (filesystem paths, network destinations, environment variables/secrets).

- Run skills in isolated sandboxes (container isolation at minimum).

- Block dangerous defaults: shell execution, package installs, and arbitrary file writes unless explicitly required.

- Explicit human approval for “high impact” actions (deleting files, sending emails externally, creating users, pushing code).

In practice, I’m trying to stop the “agent does five helpful things, then one catastrophic thing” scenario. The right control isn’t to ban the agent—it’s to gate the irreversible actions.

5) Observability and evidence: you can’t govern what you can’t replay

For decision-makers, the question quickly becomes: “Can we trust it?” The only serious answer is: “We can verify what it did.”

I capture four streams of evidence:

- Conversation trace (inputs/outputs) with retention aligned to policy.

- Tool call audit (what skills ran, with what parameters, and what result).

- System-level logs from the sandbox runtime (process, network, filesystem).

- Change records for any external systems touched (tickets created, commits made, resources deployed).

When something weird happens—and it will—you want a replayable timeline. Not anecdotes. Not screenshots.

A realistic evaluation scenario I’ve used (anonymised)

A Melbourne-based organisation I worked with wanted to see if an agent could reduce time spent on incident triage and post-incident reporting. The fear was obvious: giving an agent access to incident data could create a privacy and security mess.

We set up an evaluation environment with synthetic incidents first. The agent could read “tickets”, propose categorisation, draft a summary, and suggest containment steps—but it couldn’t execute any action in production systems.

Once the team trusted the flow, we introduced a narrow integration to a test instance of their ITSM tool. The agent could create a draft report and attach artefacts, but a human had to approve publishing.

The outcome was what I hoped for: real learning, measurable time savings, and no panic about uncontrolled access. The most valuable finding wasn’t technical—it was governance. The team got clarity on what “safe autonomy” actually meant for them.

Practical steps to stand up the evaluation environment in two weeks

If I were doing this from scratch today, I’d timebox the build and keep it pragmatic:

- Define the top 3 evaluation workflows (not 20). Pick the highest value, lowest risk first.

- Create a dedicated environment boundary (subscription/project + isolated network).

- Implement egress allowlisting and block open internet by default.

- Stand up secrets management and enforce short-lived credentials where possible.

- Adopt “synthetic-first” datasets and document any exceptions.

- Build a skill permission model and require approval for high-impact actions.

- Turn on logging from day one and test your ability to replay an incident.

- Run a deliberate prompt-injection test (e.g., seeded “malicious” documents) to validate controls.

Minimal example of a permissions-first skill wrapper

I’m not trying to turn this into a coding post, but a small pattern helps. When I create evaluation skills, I wrap them with a policy check so the agent can’t “accidentally” use a tool outside the allowed scope.

// Pseudocode: permissions-first skill execution wrapper

function runSkill(skillName, input, context) {

const policy = loadPolicyForSkill(skillName);

// Validate requested network destinations

for (const host of context.requestedHosts) {

if (!policy.allowedHosts.includes(host)) {

throw new Error(`Blocked egress to ${host}`);

}

}

// Validate filesystem access

for (const path of context.requestedPaths) {

if (!path.startsWith) {

throw new Error(`Blocked file access to ${path}`);

}

}

// Require approval for high-impact actions

if {

const approved = requestHumanApproval({ skillName, input });

if (!approved) throw new Error("Not approved");

}

return executeSkillInSandbox(skillName, input, context);

}This isn’t about bureaucracy. It’s about turning “trust me” into “we can prove what happened.” That’s the difference between a cool demo and something leaders will allow near real work.

The takeaway I keep coming back to

OpenClaw-style agents are a glimpse of the next interface for work: not forms and dashboards, but intent-driven automation. That’s exciting, and it’s why teams want to experiment right now.

My view is that the winning organisations won’t be the ones that ban agents. They’ll be the ones that learn fastest inside clear safety boundaries—then expand autonomy deliberately.

If you were setting your own guardrails, what would you treat as “non-negotiable” for an agent evaluation: identity, network, data, or auditing?